
chore(deps): update dependency torch to v2.8.0 [security] #13405


Hello @renovate-bot, I'm Gemini Code Assist¹! I'm currently reviewing this pull request and will post my feedback shortly. In the meantime, here's a summary to help you and other reviewers quickly get up to speed!

Summary of Changes

Hello team, gemini-code-assist here to provide a summary of this pull request. This PR, opened by renovate-bot, aims to update the torch dependency in the dataflow/run-inference/requirements.txt file. The primary motivation for this update is to address recently disclosed security vulnerabilities in older versions of torch, specifically CVE-2025-32434 (a Remote Command Execution vulnerability) and CVE-2025-2953 (a Denial of Service vulnerability). The PR updates the version from 2.2.2 to 2.7.1 to incorporate the necessary security fixes.

Highlights

- Security Fixes: This update is crucial as it includes fixes for significant security vulnerabilities (CVE-2025-32434 and CVE-2025-2953) found in previous versions of torch.

Changelog

- dataflow/run-inference/requirements.txt

  - Updated the torch dependency from version 2.2.2 to 2.7.1.
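A pin change like this can be checked mechanically before merging. The sketch below is only an illustration: the helper names (`parse_version`, `pinned_version`, `is_at_least`) are hypothetical, the sample strings mirror the changelog entry above, and the minimum version is supplied by the caller rather than taken from any advisory. It handles plain numeric `X.Y.Z` pins only.

```python
import re


def parse_version(text):
    """Turn a plain 'X.Y.Z' release string into a tuple of ints."""
    return tuple(int(part) for part in text.split("."))


def pinned_version(requirements_text, package):
    """Return the version pinned with '==' for `package`, or None if absent."""
    pattern = re.compile(
        rf"^\s*{re.escape(package)}\s*==\s*([0-9]+(?:\.[0-9]+)*)",
        re.IGNORECASE,
    )
    for line in requirements_text.splitlines():
        line = line.split("#", 1)[0]  # ignore trailing comments
        match = pattern.match(line)
        if match:
            return parse_version(match.group(1))
    return None


def is_at_least(requirements_text, package, minimum):
    """True if `package` is pinned at or above `minimum` (caller-chosen)."""
    pinned = pinned_version(requirements_text, package)
    return pinned is not None and pinned >= parse_version(minimum)


# Sample contents modelled on the changelog entry (before and after the bump).
before = "torch==2.2.2\n"
after = "torch==2.7.1\n"

print(is_at_least(before, "torch", "2.7.1"))
print(is_at_least(after, "torch", "2.7.1"))
```

Tuple comparison is enough for simple release numbers; a real check would likely use a proper version parser to cope with pre-releases and local version suffixes.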

Using Gemini Code Assist

The full guide for Gemini Code Assist can be found on our documentation page. Here are some quick tips.

Invoking Gemini

You can request assistance from Gemini at any point in your pull request by creating an issue comment (i.e. a comment on the pull request page) using either /gemini <command> or @gemini-code-assist <command>. Below is a summary of the supported commands.

| Feature | Command | Description |
|---|---|---|
| Code Review | /gemini review | Performs a code review for the current pull request in its current state. |
| Pull Request Summary | /gemini summary | Provides a summary of the current pull request in its current state. |
| Comment | @gemini-code-assist | Responds in comments when explicitly tagged, both in issue comments and review comments. |
| Help | /gemini help | Displays a list of available commands. |

Customization

To customize the Gemini Code Assist for GitHub experience, repository maintainers can create a configuration file and/or provide a custom code review style guide (such as PEP-8 for Python) by adding files to a .gemini/ folder in the base of the repository. Detailed instructions can be found here.

Limitations & Feedback

Gemini Code Assist is currently in preview and may make mistakes. Please leave feedback on any instances where its feedback is incorrect or counterproductive. You can react with 👍 and 👎 on @gemini-code-assist comments to provide feedback.

You can also get AI-powered code generation, chat, as well as code reviews directly in the IDE at no cost with the Gemini Code Assist IDE Extension.

Dependency update,

Fixing bugs, making code safe,

Security patch.

Footnotes

1. Review the Privacy Notices, Generative AI Prohibited Use Policy, Terms of Service, and learn how to configure Gemini Code Assist in GitHub here. Gemini can make mistakes, so double check it and use code with caution.


Code Review

This pull request updates the torch dependency from version 2.2.2 to 2.7.1. This is an important update as it addresses two security vulnerabilities: CVE-2025-32434 (Remote Command Execution in torch.load) and CVE-2025-2953 (Denial of Service in torch.mkldnn_max_pool2d).

The change is confined to the requirements.txt file and correctly updates the version string. The Renovate bot's compatibility checks indicate that this new version should be compatible with the existing dependencies, which is reassuring.

Given the security implications of the older torch version, this update is highly recommended. The code in main.py (via Apache Beam's PytorchModelHandlerTensor) and download_model.py involves model loading and saving, so ensuring PyTorch is up-to-date with security patches is crucial.

Overall, this is a beneficial and necessary update.

Summary of Findings

- Security Vulnerability Remediation: The primary purpose of this PR is to update torch to v2.7.1, which addresses critical security vulnerabilities (CVE-2025-32434 and CVE-2025-2953) present in the previous version (v2.2.2). This is a crucial improvement for the security posture of the application.
- Dependency Update: The torch dependency has been updated from 2.2.2 to 2.7.1 in dataflow/run-inference/requirements.txt. This change is correctly implemented.

Merge Readiness

This pull request directly addresses known security vulnerabilities by updating the torch library. The change is minimal and appears to be compatible according to automated checks. I recommend merging this PR to enhance the security of the project. As I am an AI assistant, I am not authorized to approve pull requests; please ensure it undergoes any further necessary human review and testing procedures before merging.


This PR contains the following updates:

torch ==2.2.2 -> ==2.8.0

GitHub Vulnerability Alerts

CVE-2025-2953

A vulnerability, which was classified as problematic, has been found in PyTorch 2.6.0+cu124. Affected by this issue is the function torch.mkldnn_max_pool2d. The manipulation leads to denial of service. An attack has to be approached locally. The exploit has been disclosed to the public and may be used.

CVE-2025-3730

A vulnerability, which was classified as problematic, was found in PyTorch 2.6.0. Affected is the function torch.nn.functional.ctc_loss of the file aten/src/ATen/native/LossCTC.cpp. The manipulation leads to denial of service. An attack has to be approached locally. The exploit has been disclosed to the public and may be used. The name of the patch is 46fc5d8e360127361211cb237d5f9eef0223e567. It is recommended to apply a patch to fix this issue.

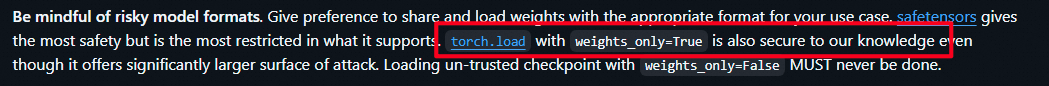

CVE-2025-32434

Description

I found a Remote Command Execution (RCE) vulnerability in PyTorch. When loading a model using torch.load with weights_only=True, an attacker can still achieve RCE.

Background knowledge

https://github.com/pytorch/pytorch/security

As you can see, the PyTorch official documentation considers using torch.load() with weights_only=True to be safe.

Since everyone knows that weights_only=False is unsafe, they use weights_only=True to mitigate the security issue.

But now, I just proved that even if you use weights_only=True, it can still achieve RCE.
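To make the background concrete, here is a minimal sketch of the general idea behind restricting what a pickle stream may reference, using only the standard library (the "restricting globals" pattern from the Python `pickle` documentation). It is not PyTorch's `weights_only` implementation, and the names `ALLOWED`, `AllowlistUnpickler`, `restricted_loads`, and `Payload` are hypothetical. The advisory above reports that the equivalent restriction in `torch.load` could be bypassed in affected versions, which is why upgrading is the fix rather than relying on the flag.

```python
import io
import pickle
from collections import OrderedDict

# Globals the loader is willing to resolve; everything else is rejected.
ALLOWED = {("collections", "OrderedDict")}


class AllowlistUnpickler(pickle.Unpickler):
    def find_class(self, module, name):
        # Called whenever the pickle stream references a global by name.
        if (module, name) in ALLOWED:
            return super().find_class(module, name)
        raise pickle.UnpicklingError(f"global '{module}.{name}' is not allowed")


def restricted_loads(data):
    """Unpickle `data`, refusing any global outside ALLOWED."""
    return AllowlistUnpickler(io.BytesIO(data)).load()


class Payload:
    """Stand-in for a hostile object: unpickling it would call a function."""

    def __reduce__(self):
        # A harmless callable here; an attacker would pick something worse.
        return (print, ("side effect during unpickling",))


benign = pickle.dumps(OrderedDict(weight=1))
hostile = pickle.dumps(Payload())

print(restricted_loads(benign))
try:
    restricted_loads(hostile)
except pickle.UnpicklingError as exc:
    print("blocked:", exc)
```

An allowlist narrows the attack surface but is only as strong as the code behind the allowed names, so files from untrusted sources still deserve caution.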

Credit

This vulnerability was found by Ji'an Zhou.

Release Notes

pytorch/pytorch (torch)

v2.8.0: PyTorch 2.8.0 Release

PyTorch 2.8.0 Release Notes

Highlights

For more details about these highlighted features, you can look at the release blogpost.

Below are the full release notes for this release.

Tracked Regressions

Windows wheel builds with CUDA 12.9.1 stack overflow during build (#156181)

Due to a bug introduced in CUDA 12.9.1, we are unable to complete full Windows wheel builds with this version, as compilation of torch.segment_reduce() crashes the build. Thus, we provide a wheel without torch.segment_reduce() included in order to sidestep the issue. If you need support for torch.segment_reduce(), please utilize a different version.

Backwards Incompatible Changes

CUDA Support

Removed support for Maxwell and Pascal architectures with CUDA 12.8 and 12.9 builds (#157517, #158478, #158744)

Due to binary size limitations, support for sm50 - sm60 architectures with CUDA 12.8 and 12.9 has

been dropped for the 2.8.0 release. If you need support for these architectures, please utilize

CUDA 12.6 instead.

Python Frontend

Calling an op with an input dtype that is unsupported now raises NotImplementedError instead of RuntimeError (#155470)

Please update exception handling logic to reflect this.

In 2.7.0

In 2.8.0
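One way to write a handler that behaves the same on both versions relies on a fact about Python itself: `NotImplementedError` is a subclass of `RuntimeError`. The sketch below does not call PyTorch; `run_unsupported_op` is a hypothetical stand-in that raises either exception type, and the messages are placeholders.

```python
def run_unsupported_op(new_style):
    """Hypothetical stand-in for an op called with an unsupported dtype."""
    if new_style:
        raise NotImplementedError("placeholder message (2.8-style)")
    raise RuntimeError("placeholder message (2.7-style)")


def describe_failure(new_style):
    try:
        run_unsupported_op(new_style)
    except RuntimeError as exc:
        # Catches both: NotImplementedError derives from RuntimeError.
        if isinstance(exc, NotImplementedError):
            return "NotImplementedError"
        return "RuntimeError"


print(issubclass(NotImplementedError, RuntimeError))
print(describe_failure(new_style=False))
print(describe_failure(new_style=True))
```

Existing `except RuntimeError` blocks therefore keep catching the error; code that is more likely to need attention compares `type(exc) is RuntimeError`, or has a separate `except NotImplementedError` branch that will now be taken.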

Added missing in-place on view check to custom autograd.Function (#153094)

In 2.8.0, if a custom autograd.Function mutates a view of a leaf requiring grad, it now properly raises an error. Previously, it would silently leak memory.

Output:

Version 2.7.0

Version 2.8.0

An error is now properly thrown for the out variant of tensordot when called with a requires_grad=True tensor (#150270)

Please avoid passing an out tensor with requires_grad=True as gradients cannot be computed for this tensor.

In 2.7.0

In 2.8.0

torch.compile

Specialization of a tensor shape with mark_dynamic applied now correctly errors (#152661)

Prior to 2.8, it was possible for a guard on a symbolic shape to be incorrectly omitted if the symbolic shape evaluation was previously tested with guards suppressed (this often happens within the compiler itself). This has been fixed in 2.8 and usually will just silently "do the right thing" and add the correct guard. However, if the new guard causes a tensor marked with mark_dynamic to become specialized, this can result in an error. One workaround is to use maybe_mark_dynamic instead of mark_dynamic.

See the discussion in issue #157921 for more context.

Version 2.7.0

Version 2.8.0

Several config variables related to torch.compile have been renamed or removed

- enable_cpp_framelocals_guard_eval has changed to no longer have any effect (#151008).
- rocm.n_max_profiling_configs is deprecated (#152341). Instead, use ck-tile based configs rocm.ck_max_profiling_configs and rocm.ck_tile_max_profiling_configs.
- autotune_fallback_to_aten is deprecated (#154331). Inductor will no longer silently fall back to ATen. Please add "ATEN" to max_autotune_gemm_backends for the old behavior.
- use_mixed_mm and mixed_mm_choice are deprecated (#152071). Inductor now supports prologue fusion, so there is no need for special cases now.
- descriptive_names = False is deprecated (#151481). Please use one of the other available options: "torch", "original_aten", or "inductor_node".
- custom_op_default_layout_constraint has moved from inductor config to functorch config (#148104). Please reference it via torch._functorch.config.custom_op_default_layout_constraint instead of torch._inductor.config.custom_op_default_layout_constraint.
- emit_current_arch_binary is deprecated (#155768).
- aot_inductor.embed_cubin has been renamed to aot_inductor.embed_kernel_binary (#154412).
- aot_inductor.compile_wrapper_with_O0 has been renamed to compile_wrapper_opt_level (#148714).

Added a stricter aliasing/mutation check for HigherOrderOperators (e.g. cond), which will explicitly error out if alias/mutation among inputs and outputs is unsupported (#148953, #146658).

For affected HigherOrderOperators, add .clone() to aliased outputs to address this.

Version 2.7.0

Version 2.8.0

guard_or_x and definitely_x have been consolidated (#152463)

We removed definitely_true / definitely_false and associated APIs, replacing them with guard_or_true / guard_or_false, which offer similar functionality and can be used to achieve the same effect. Please migrate to the latter.

Version 2.7.0

Version 2.8.0
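For the torch.compile config renames and deprecations listed earlier in this section, a project that keeps its Inductor overrides in a plain dictionary could audit them before upgrading. The sketch below is only an illustration: the option names are copied from the notes above, while `audit_inductor_overrides`, the two lookup tables, and the sample dictionary are hypothetical. It reports findings and does not rewrite values, since the notes do not say whether renamed options keep the same value type.

```python
# Old name -> new name, as stated in the release notes above.
RENAMED = {
    "aot_inductor.embed_cubin": "aot_inductor.embed_kernel_binary",
    "aot_inductor.compile_wrapper_with_O0": "compile_wrapper_opt_level",
}

# Options the release notes above describe as deprecated.
DEPRECATED = {
    "rocm.n_max_profiling_configs",
    "autotune_fallback_to_aten",
    "use_mixed_mm",
    "mixed_mm_choice",
    "emit_current_arch_binary",
}


def audit_inductor_overrides(overrides):
    """Return one human-readable finding per affected option name."""
    findings = []
    for key in overrides:
        if key in RENAMED:
            findings.append(f"{key}: renamed to {RENAMED[key]}")
        elif key in DEPRECATED:
            findings.append(f"{key}: deprecated in 2.8")
    return findings


# Hypothetical overrides a project might carry over from 2.7.
sample = {
    "aot_inductor.embed_cubin": True,
    "unrelated_option": 1,
    "use_mixed_mm": True,
}
for finding in audit_inductor_overrides(sample):
    print(finding)
```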

torch.export

torch.export.export_for_inference has been removed in favor of torch.export.export_for_training().run_decompositions() (#149078)

Version 2.7.0

Version 2.8.0

Switched default to strict=False in torch.export.export and export_for_training (#148790, #150941)

This differs from the previous release default of strict=True. To revert to the old default behavior, please explicitly pass strict=True.

Version 2.7.0

Version 2.8.0

ONNX

Default opset in torch.onnx.export is now 18 (#156023)

When dynamo=False, the default ONNX opset version has been updated from 17 to 18. Users can set opset_version to explicitly select an opset version.

Version 2.7

Version 2.8
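Callers who depend on the previous opset can state it explicitly instead of relying on the default. The sketch below is a hedged illustration: `legacy_onnx_export_kwargs` and `export_with_legacy_defaults` are hypothetical helper names, and only the `opset_version` and `dynamo` keywords mentioned in these notes are used. The torch import is deferred so the first helper can be inspected without PyTorch installed.

```python
def legacy_onnx_export_kwargs(opset_version=17):
    """Keyword arguments that spell out the pre-2.8 defaults explicitly."""
    return {"dynamo": False, "opset_version": opset_version}


def export_with_legacy_defaults(model, example_args, path):
    """Export `model` to `path` with the explicit settings above."""
    import torch  # deferred: only needed when an export is actually run

    torch.onnx.export(model, example_args, path, **legacy_onnx_export_kwargs())


print(legacy_onnx_export_kwargs())
```

Passing both values explicitly keeps the export unaffected by default changes in either direction.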

The JitTraceConvertStrategy has been removed (#152556)

Support for JIT traced and scripted modules in the ONNX exporter when dynamo=True has been removed. You are encouraged to export an nn.Module directly, or create an ExportedProgram using torch.export before exporting to ONNX.

onnxscript>=0.3.1 is required for the dynamo=True option (#157017)

You must upgrade onnxscript to version 0.3.1 or higher for it to be compatible with PyTorch 2.8.

Build Frontend

Removed the torch/types.h include from Dispatcher.h (#149557)

This can cause build errors in C++ code that implicitly relies on this include (e.g. very old versions of torchvision).

Note that Dispatcher.h does not belong as an include from torch/types.h and was only present as a short-term hack to appease torchvision. If you run into torchvision build errors, please update to a more recent version of torchvision to resolve this.

Upgraded DLPack to 1.0 (#145000)

As part of the upgrade, some of the DLDeviceType enum values have been renamed. Please switch to the new names.

Version 2.7.0

Version 2.8.0

NVTX3 code has been moved from cmake/public/cuda.cmake to cmake/Dependencies.cmake (#151583)

This is a BC-breaking change for the build system interface. Downstream projects that previously got NVTX3 through cmake/public/cuda.cmake (i.e. calling find_package(TORCH REQUIRED)) will now need to explicitly configure NVTX3 support in the library itself (i.e. use USE_SYSTEM_NVTX=1). The change is to fix the broken behavior where downstream projects couldn't find NVTX3 anyway due to the PROJECT_SOURCE_DIR mismatch.

Version 2.7.0:

- -DUSE_SYSTEM_NVTX would be able to find NVTX3 and torch::nvtx3 via PyTorch's cmake/public/cuda.cmake logic.
- -DUSE_SYSTEM_NVTX would encounter build errors with CUDA 12.8 or above.

Version 2.8.0:

- -DUSE_SYSTEM_NVTX will not be able to find NVTX3 or torch::nvtx3 via PyTorch's cmake/public/cuda.cmake. The downstream project now needs to explicitly find NVTX3 and torch::nvtx3 by implementing the same logic in PyTorch's cmake/Dependencies.cmake.
- -DUSE_SYSTEM_NVTX will proceed building without NVTX unless another part of the build process re-enables NVTX.

Deprecations

MPS support for MacOS Ventura will be removed in 2.9

PyTorch 2.8 is the last release that will support GPU acceleration on MacOS Ventura. In the next

release (2.9), MacOS Sonoma (released in Sept. 2023) or above will be required to use the MPS

backend.

torch.ao.quantization is deprecated and will be removed in 2.10 (#153892)

To migrate:

- torch.ao.quantization.quantize, torch.ao.quantization.quantize_dynamic:
  - torchao eager mode quantize_.
  - torchao PT2E quantization.
- torch.ao.quantization.quantize_fx.prepare_fx, torch.ao.quantization.quantize_fx.convert_fx: use torchao PT2E quantization (torchao.quantization.quantize_pt2e.prepare_pt2e, torchao.quantization.quantize_pt2e.convert_pt2e).

Note that PT2E quantization has been migrated to torchao (https://github.com/pytorch/ao/tree/main/torchao/quantization/pt2e). See pytorch/ao#2259 and https://docs.pytorch.org/ao/main/quick_start.html#pytorch-2-export-quantization for more details.

The dynamo=False (current default) option for torch.onnx.export is deprecated (#152478, #155580)

The default will be dynamo=True starting from PyTorch 2.9. You are encouraged to migrate to use the dynamo=True option in torch.onnx.export. This flag makes torch.export.export the default export path, replacing TorchScript.

To maintain the old behavior, set dynamo=False explicitly. You are encouraged to also experiment with the fallback=True option that will make the exporter fall back to the dynamo=False path if there are errors.

New Features

CUDA

torch.compile

Dynamo

- nested_compile_region (#156449)
- guard_filter_fn (#150936)
- dont_skip_tracing decorator to skip over most Dynamo skipfiles rules (#150586)

Inductor

torch.export

- draft-export, an export variant designed to consistently produce a graph and generate a debugging report of issues encountered during tracing (#152637, #153219, #149465, #153627, #154190, #155744, #150876, #150948, #151051, #151065, #150809, #151797)

Ahead-Of-Time Inductor (AOTI)

- TorchBind objects (#150196, #154265)
- aot_inductor.model_name_for_generated_files for specifying model name (#154129)

MPS

- MPSInductor: torch.compile for Apple GPUs (#150121, #149342, #151449, #151754, #149687, #149180, #149221, #153598, #152788, #153787, #152214, #151152, #155891, #154578, #151272, #151288, #153997, #151871, #153362, #156566, #150661, #153582)

ONNX

- Added new strategy draft_export (#147529, docs) to provide debugging information upon data-dependent / constraint errors when obtaining an ExportedProgram with torch.onnx.export
- Added support for symbolic operators in the dynamo=True export path (#148905, #149678, #150038, docs). Two operators torch.onnx.ops.symbolic and torch.onnx.ops.symbolic_multi_out are defined to allow you to create symbolic ONNX operators directly in your PyTorch models. You can use them in a forward method:

Python Frontend

Quantization

- torch.float4_e2m1fn_x2 dtype (#148791)

XPU

Improvements

Build Frontend

- TORCH_CUDA_ARCH_LIST (#152715, #155314)

Composability

C++ Frontend

- bicubic mode for torch::nn::functional::grid_sample (#150817)

CUDA

- no_implicit_headers mode for load_inline() on custom CUDA extensions (#149480)

cuDNN

Distributed

c10d

- TCPStore with clone and queuing features (#150966, #151045, #150969, #151485)
- getDefaultBackend more fault tolerant without relying on exceptions (#149152)
- masterListenFd in TCPStoreLibUvBackend (#150215)
- TORCH_NCCL_USE_TENSOR_REGISTER_ALLOCATOR_HOOK (#150682)
- global_rank when group_rank is used (#151373)
- ProcessGroupNCCL via an unsafe API (#152496)
- needs_contiguous_strides tag in functional collective (#153399, #153523)
- split_group to work with non-nccl backends (#152175)
- new_subgroups() by using new_subgroups_by_enumeration() (#153843)
- ProcessGroupNCCL (#153990)
- c10::Half for gloo (#153862)
- get_process_group_ranks() to accept group=None (#154902)
- init_process_group support index-only device id (#156214)
- ProcessGroup (#151723)
- reduce_scatter and ReduceOp::AVG in ProcessGroupGloo (#149781, #149869)
- ProcessGroupNCCL (#152706)
- ibverbs backend in gloo and enabled gloo CUDA when used with a backend that supports GPUDirect (#153015, #153425, #153406)

DeviceMesh

DistributedDataParallel (DDP)

- use_python_reducer to C++ reducer (#152735)

DistributedStateDict (DSD)

- write_size in planner write items (#149699)

DTensor

- StridedShard support uneven sharding (#150490)
- torch.cumsum (#151071)
- DTensor redistribute fwd/bwd datatype conversion to enable SimpleFSDP mixed precision training (#150740)
- torch.distributed.tensor.debug.visualize_sharding (#152027)

FullyShardedDataParallel2 (FSDP2)

- PrivateUse1 backend in FSDP collectives and device type to pre forward hook (#147260, #149487)
- set_reshard_after_forward (#149103)
- reshard_after_forward=True for root model and kept root unsharded when not specifying reshard_after_forward (#154704, #155319)
- all_reduce_event only if it's not CPU device (#150316)

Pipeline Parallelism

- get_pipeline_order() for Gpipe and 1F1B (#155935)

ShardedTensor

- ShardedTensor and recalculated metadata from all_gather (#152583)

TensorParallel

- ParallelStyle PrepareModuleInputOutput (#150372)

torchelastic

torch.compile

Dynamo

- __torch_function__, and namedtuple subclasses (#153150, #149792, #153982)
- #151962, #149707, #149709, #148799, #148801)
- reason field to torch.compiler.disable (#150341)
- lru_cache warnings for functions in the top-level torch namespace (#157718)

Inductor

- aot_inductor.custom_ops_to_c_shims and aot_inductor.custom_op_libs: allow for specifying custom op C shim (#153968)
- max_fusion_buffer_group_pairwise_attempts: limits fusions to specified node distance (#154688)
- cuda.cutlass_enabled_ops: controls CUTLASS operation selection (#155770)
- triton.cudagraph_capture_sizes: allows specifying certain shapes for which to capture CUDAGraphs; skips CUDAGraphs for other shapes (#156551)
- use_static_cuda_launcher: enables launching compiled triton statically to improve cold start times (#148890)
- assume_unaligned_fallback_output: allows inductor to track unaligned outputs (#150777)
- cuda.cutlass_tma_only: controls whether or not to only use TMA-compatible kernels in CUTLASS (#152815)
- static_launch_user_defined_triton_kernels: enables statically launching user defined triton kernels (#153725)
- precompilation_timeout_seconds: controls the timeout on precompilation (#153788)
- disable_decompose_k: disables new DecomposeK GEMM Kernels (#154421)
- min_num_split: sets the minimum number of splits in a split reduction (#155941)
- max_autotune_flex_search_space: allows specifying the size of the search space for flex attention autotuning (#156307)
- LOG_AUTOTUNE_RESULTS for autotune log (#156254)

torch.export

- min, max, math.pow) (#151348)
- pytree.register_dataclass (#147752)
- jit.scripted functions in export (#155180)

Ahead-Of-Time Inductor (AOTI)

- num_runners to AOTIModelPackageLoader (#149364)

FX

- == (#150611)
- normalize_function (#143689)
- graph_code_verbose_log artifact for FX passes (#153775)
- fx.passes.split_module to normalize input names (#157793)

Linear Algebra Frontend

- cross (#154999)

MPS

- torch.special operations as well as index_copy, hardshrink, rsub, col2im, and isin (#149174, #149203, #149123, #149368, #149378, #149563, #149687, #149705, #149783, #149407/#149680, #150279, #151754, #153786, #154326, #155304, #156263, #155382, #154010, #149816, #152282, #156090, #150060, #151600, #155002, #154671)
- index_put with half precision floats (#151869)
- ConvTranspose3D with FP32 and complex (#154696)
- log1p and sigmoid with int64 (#151791)

Nested Tensor (NJT)

torch.nn

- weight_norm on CPU (#148878)

ONNX

- dynamo=True (#149901, #154596)
- Attention-23 and RotaryEmbedding-23 as native PyTorch ops (#156431, #156367, #154745)
- torch.scan (#154513)
- group_norm support from opset 21 (#152138)
- asdict method to VerificationInfo class (#151024)
- dynamic_shapes behavior to use torch.export.dim.DYNAMIC (#153065)
- sym_float, sym_not, sym_min, sym_max (#153200, #152111, #152196)

Optimizer

- TensorLR variant for fused Adagrad on CPU (#153078)
- lr_lambda type check in MultiplicativeLR (#151973)

Profiler

Python Frontend

- torch.AcceleratorError (#152023)
- Size.__radd__() (#152554)
- get_default_device() to also respect torch.device context manager (#148621)

Quantization

- mul / add / add_relu and batch_norm2d), qconv1d-relu fusion, and lowering pass (#151112, #152411, #152811, #150751, #149708)
- torch.fused_moving_avg_obs_fake_quant on CUDA (#153699)

Release Engineering

ROCm

- cpp_extension (#152432)
- mm / bmm / addmm (#153262)

Sparse Frontend

- PrivateUse1 extension (#149374)

torch.func

- torch.Tensor.scatter_add_ (#150543), torch.matrix_exp (#155202)

XPU

- embed_cubin and multi_arch_kernel_binary options in AOTI for Intel GPU (#154514, #153924)
- UserDefineClass (#155787)

Bug Fixes

Build Frontend

- CMake-4.x (#150203)
- gcc-12+ (#150847)
- /permissive- flag (#149035)

Composability

- torch.norm for scalar input (#144073)

CPU (x86)

- log_softmax reduced-precision fp kernel (#156379)

CUDA

- torch.backends.cuda.matmul.allow_fp16_accumulation crash when using cuBLASLt (#153083)
- AsyncMM on Blackwell (#153519)
- torch.cuda.MemPool for multithreaded use-cases (#153356)
- sum() on a default-constructed gamma / beta in layer_norm (#156600)
- empty_cache under mempool context (#158180)

Distributed

c10d

- all_to_all (#149485)
- group input argument in new_subgroups() (#152765, #153798)

Distributed Checkpointing (DCP)

- broadcast_object util function (#155912)

DistributedDataParallel (DDP)

- DDPOptimizer issue on static tensor index (#155746)

DTensor

- local_map with multi-threading (#149070)
- new_local_tensor in redistribute be None case (#152303)

Pipeline Parallelism

RPC

Configuration

📅 Schedule: Branch creation - At any time (no schedule defined), Automerge - At any time (no schedule defined).

🚦 Automerge: Disabled by config. Please merge this manually once you are satisfied.

♻ Rebasing: Never, or you tick the rebase/retry checkbox.

🔕 Ignore: Close this PR and you won't be reminded about this update again.

This PR was generated by Mend Renovate. View the repository job log.